Optical computing, a hot research topic several decades ago, has re-emerged as a promising technology, this time backed by artificial intelligence.

In a Nature article, Aydogan Ozcan, the Volgenau Professor for Engineering Innovation at the UCLA Samueli School of Engineering, and colleagues outline recent advances in artificial intelligence and their impact on visual computing applications. Research in this emerging area suggests that AI inference performed on incoming light as it passes through an optical device could play a key role in new visual computing technologies and capabilities that require little or no power to run. The article’s co-authors are researchers from Stanford University, the Massachusetts Institute of Technology, École Polytechnique Fédérale de Lausanne in Switzerland, Sorbonne University in France and the University of Münster in Germany.

According to the authors, optical computing, which uses photons instead of electrons to perform computations, has shown promise over the years. However, limited applications and technological hurdles caused enthusiasm to fade after the field's heyday in the 1980s, and interest waned through the 1990s.

Although optical computing platforms advanced in the ensuing decades, challenges remain before the technology can become a practical, general-purpose system. According to the researchers, however, one bright spot has emerged in recent years.

Beginning in the 2010s, the major success of deep neural networks has offered a vehicle for emerging applications in optical computing. These networks underpin deep learning, a type of artificial intelligence that processes information through a series of layers of interconnected nodes. Familiar applications that could use deep learning include autonomous cars, robotic vision, smart homes, remote sensing and medical imaging. AI-based optical systems in these applications could enhance the capabilities of conventional electronic computers by using the information in incoming light to rapidly analyze objects and their surroundings. Such hybrid computing systems could combine the speed and parallelism of optical computing with the flexibility and maturity of electronic computing. A major challenge remains: making these systems energy efficient without compromising performance.

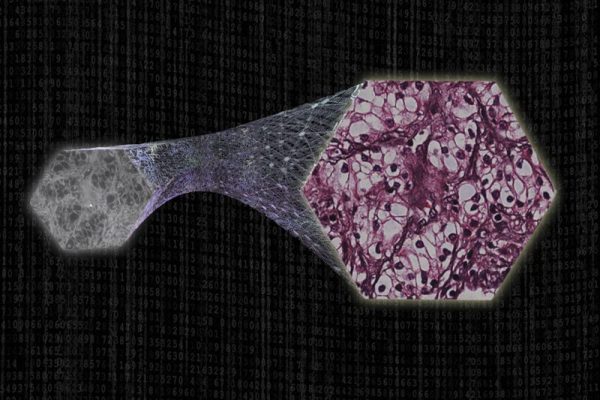

In 2018, Ozcan led groundbreaking research that produced an optical neural network capable of instantly processing and identifying objects using no energy other than the incoming light, and he followed it with a study showing major improvements to the concept. He has also spearheaded efforts to apply artificial intelligence to medical imaging, such as building comprehensive 3D images from 2D images of living cells and tissues, and transforming low-resolution microscopic images into far sharper, more detailed ones. These concepts could lay the foundation for a “thinking microscope” as depicted in the Nature article.

Ozcan holds faculty appointments at UCLA in electrical and computer engineering and in bioengineering. He is also the associate director of the California NanoSystems Institute (CNSI) and an HHMI Professor with the Howard Hughes Medical Institute.